Good News! AI-first Warp Terminal is Now Open Source

Warp has open-sourced its terminal client. The code is now on GitHub, and the company wants the community involved in building it out going forward. The contribution model, however, looks nothing like what you would expect from an open source project.

Warp says the main bottleneck in development is no longer writing code but human-led tasks, such as deciding on features and verifying that the software behaves as intended.

The plan is for agents to handle the implementation while human contributors focus on ideas, spec work, and review. The developers are confident that Oz-generated code, guided by their own rules and verification processes, puts contributors in a good position to get features right.

If you didn't know, Oz is Warp's cloud agent orchestration platform, announced earlier this year, which lets you run multiple coding agents in parallel in the cloud with full visibility and control over what they're doing.

Announcing this move, Zach Lloyd, the CEO of Warp, added that:

"Open-sourcing is fundamentally coming from our desire to build a successful business. We are competing with other highly funded, closed-source competitors, and we think opening and providing the resources for the community to improve Warp is a smart way for us to accelerate product development."

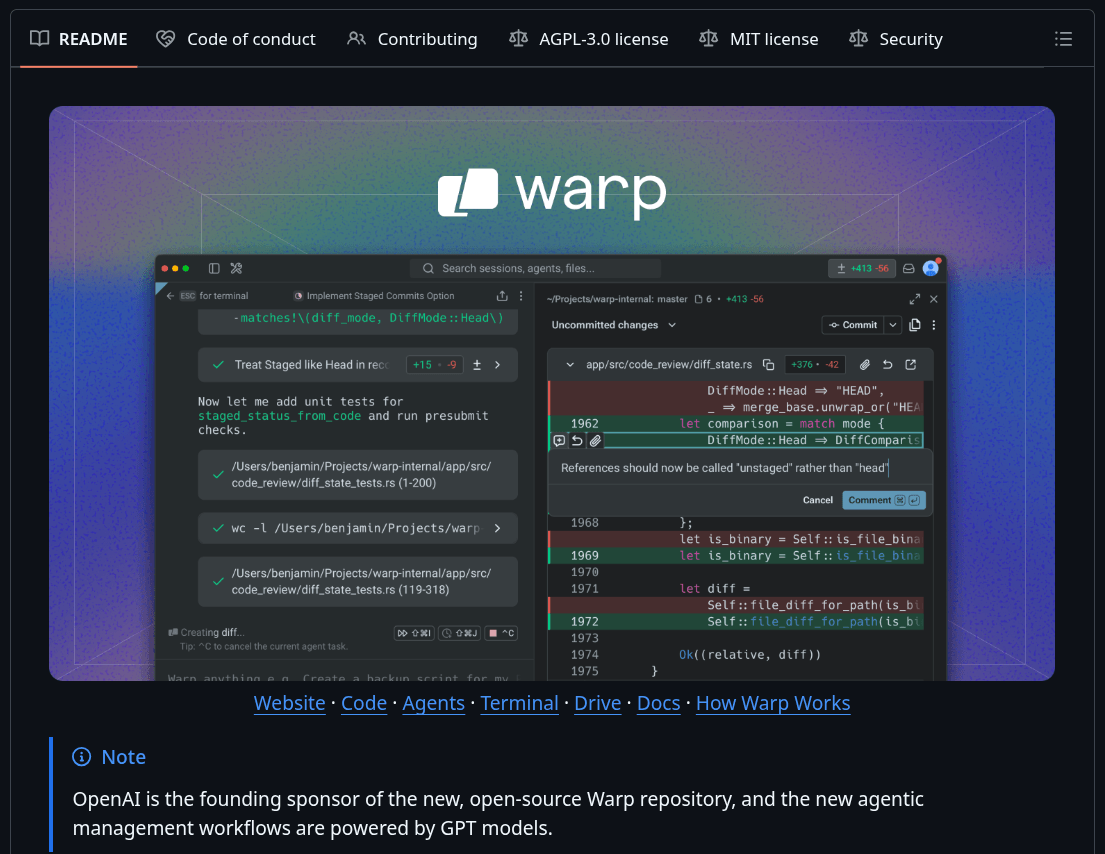

As a refresher, Warp is a modern terminal and agentic development environment built in Rust. It runs on Linux, Windows, and macOS, with a block-based command interface and built-in support for AI coding agents like Claude Code, Codex, and Gemini CLI.

Get the sauce

The client codebase is now live at github.com/warpdotdev/warp, and the licensing is split by component. The UI framework, consisting of the warpui_core and warpui crates, is under the MIT license, while the rest of the codebase is under AGPLv3.

OpenAI is the founding sponsor of the repository, and the agentic contribution workflows are powered by GPT models. Keep in mind that other coding agents are welcome too, but Warp would rather you use Oz, which already has the right context and checks baked in for this workflow.

Warp is also expanding open-source model support with this announcement, bringing in Kimi, MiniMax, and Qwen, plus a new "auto (open)" routing option that selects the best open model for a given task. A settings file for programmatic control and easier portability across devices is shipping too.

Source: It's FOSS