Getting Started Guide with Chains in LangChain

This post demonstrates how to get started with chains in LangChain.

How to Get Started With Chains Using the LangChain Module?

LangChain provides the components needed to build applications on top of Large Language Models (LLMs). A single LLM call is enough for simple applications, but more complex systems require chaining LLMs with other components such as prompt templates. To get started with chains using the LangChain modules, follow the steps in this guide:

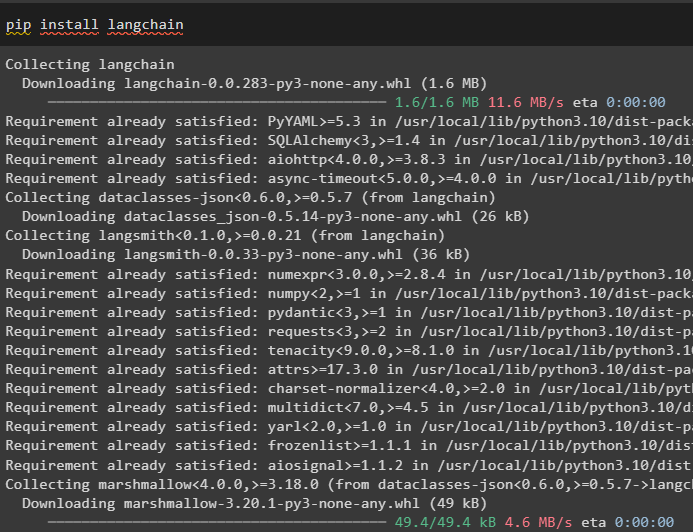

Step 1: Install Modules

First of all, install the LangChain module with the pip command (pip install langchain) to start the process with chains.

After that, install the OpenAI module in the same way (pip install openai) to access OpenAI's Large Language Models.

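Set up the environment with the OpenAI API key by calling the getpass() method from the "getpass" library and storing the result in os.environ from the "os" library:

import os
import getpass

# Prompt for the key without echoing it, then expose it to the OpenAI client
os.environ["OPENAI_API_KEY"] = getpass.getpass("OpenAI API Key:")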

After executing the above code, paste the API key into the input prompt and press Enter to continue.
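Entering the key on every run can become tedious. As a minimal sketch, the key can be requested only when the environment variable is not already set. The helper name ensure_api_key and its prompt_fn parameter are assumptions made for illustration; they are not part of LangChain or of the original steps:

```python
import getpass
import os


def ensure_api_key(env_var="OPENAI_API_KEY", prompt_fn=getpass.getpass):
    """Return the key stored in env_var, prompting for it only if it is missing."""
    key = os.environ.get(env_var)
    if not key:
        # Ask interactively; getpass hides the typed characters
        key = prompt_fn("OpenAI API Key:")
        os.environ[env_var] = key
    return key
```

Calling ensure_api_key() once before building the LLM leaves OPENAI_API_KEY set for the rest of the session.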

Step 2: Using LLMChain

After setting up the OpenAI environment, import the OpenAI and PromptTemplate classes and build the LLM using the OpenAI() method. Then, configure the PromptTemplate() method with its input variable and the template text used to ask the model a question:

# Import paths as used by the LangChain release this guide was written for
from langchain.llms import OpenAI
from langchain.prompts import PromptTemplate

# Build the LLM using the OpenAI() method
llm = OpenAI(temperature=0.9)

# Define a prompt template with a single input variable
prompt = PromptTemplate(
    input_variables=["product"],
    template="What can be a good title for business making {product}",
)
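To see what text the template produces before any model is called, the placeholder substitution can be imitated with plain Python. This is only a rough stand-in that assumes {name} placeholders in the style of str.format; it does not call LangChain, and the variable names are illustrative:

```python
# Same template text as in the PromptTemplate above
template = "What can be a good title for business making {product}"

# Fill the {product} placeholder, as happens before the text reaches the LLM
filled_prompt = template.format(product="clothes")
print(filled_prompt)
```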

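After building the prompt, create the chain with the LLMChain() method and call its run() method with the value of the variable used in the query to print the answer on the screen:

from langchain.chains import LLMChain

# Combine the LLM and the prompt template into a chain
chain = LLMChain(llm=llm, prompt=prompt)

# Run the chain with a value for the {product} variable
print(chain.run("clothes"))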
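Conceptually, a chain of this kind formats the prompt with the supplied value and passes the resulting text to the model. The sketch below illustrates that flow with a hypothetical TinyChain class and a fake_llm function standing in for a real model. Neither is part of LangChain, and the real LLMChain does considerably more:

```python
class TinyChain:
    """Illustrative stand-in: format a prompt, then hand it to a model callable."""

    def __init__(self, llm, template, variable):
        self.llm = llm            # any callable that takes a prompt string
        self.template = template  # text containing one {placeholder}
        self.variable = variable  # name of that placeholder

    def run(self, value):
        prompt_text = self.template.format(**{self.variable: value})
        return self.llm(prompt_text)


def fake_llm(prompt_text):
    # Stand-in for a real model call; it only echoes the prompt it received
    return f"[model answer to: {prompt_text}]"


chain_sketch = TinyChain(
    llm=fake_llm,
    template="What can be a good title for business making {product}",
    variable="product",
)
print(chain_sketch.run("clothes"))
```

Because fake_llm only echoes its input, the printed text shows exactly which prompt a real model would have received.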

Step 3: Using LLMChain With Multiple Variables

This step uses a template similar to the previous one with a slight change: the prompt contains multiple variables, and their values are supplied as a dictionary while executing the chain:

prompt = PromptTemplate(
    input_variables=["business", "prod"],
    template="What is a good name for {business} that makes {prod}",
)

chain = LLMChain(llm=llm, prompt=prompt)

# Pass one value for each input variable
print(chain.run({
    'business': "ABC Startup",
    'prod': "clothes"
}))
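With several variables, a mistyped or missing key is an easy mistake to make. As a hedged sketch using only Python's standard library, the placeholders in a template can be listed and compared with the supplied dictionary before the chain is run. The helper names template_variables and missing_inputs are hypothetical and are not LangChain functions:

```python
from string import Formatter


def template_variables(template):
    """Return the placeholder names found in a str.format-style template."""
    return [name for _, name, _, _ in Formatter().parse(template) if name]


def missing_inputs(template, values):
    """Return the placeholders that have no entry in the values dictionary."""
    return [name for name in template_variables(template) if name not in values]


template = "What is a good name for {business} that makes {prod}"
print(template_variables(template))
print(missing_inputs(template, {"business": "ABC Startup"}))
```

An empty list from missing_inputs() indicates that every placeholder in the template has a value.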

Step 4: Using the Chat Model

The following code uses the chat model to configure the LLM and builds the prompt template for human messages:

from langchain.chat_models import ChatOpenAI
from langchain.prompts.chat import (
    ChatPromptTemplate,
    HumanMessagePromptTemplate,
)

# Build the prompt template using the HumanMessagePromptTemplate() method with the structure of the input
human_message_prompt = HumanMessagePromptTemplate(
    prompt=PromptTemplate(
        template="What can be a good title for business making {prod}?",
        input_variables=["prod"],
    )
)
chat_prompt_template = ChatPromptTemplate.from_messages([human_message_prompt])

# Configure the chat model
chat = ChatOpenAI(temperature=0.9)

# Build the LLMChain using the LLMChain() method with the chat model and the chat prompt
chain = LLMChain(llm=chat, prompt=chat_prompt_template)
print(chain.run("clothes"))

Executing the above code prints the chat model's answer to the query on the screen.
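The main difference from the earlier steps is that a chat model receives a list of role-tagged messages instead of a single string. The following sketch mimics that idea with a hypothetical Message dataclass and build_chat_prompt helper. These names are assumptions for illustration and are not LangChain classes:

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str     # who is speaking, e.g. "human"
    content: str  # the formatted message text


def build_chat_prompt(template, **values):
    """Format the template and wrap it in a single human message."""
    return [Message(role="human", content=template.format(**values))]


messages = build_chat_prompt(
    "What can be a good title for business making {prod}?", prod="clothes"
)
print(messages)
```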

That’s all about getting started with chains using the LangChain framework.

Conclusion

To get started with chains in LangChain, install the required modules using the pip command. Then, import the libraries and build the prompt template and the LLMChain. After that, configure prompts with multiple variables and use chat models to execute chains. This post has explained how to get started with chains in LangChain.

Source: linuxhint.com